RealImpact: A Dataset of Impact Sound Fields for Real Objects

CVPR 2023 (Highlight)

Abstract

Objects make unique sounds under different perturbations, environment conditions, and poses relative to the listener. While prior works have modeled impact sounds and sound propagation in simulation, we lack a standard dataset of impact sound fields of real objects for audio-visual learning and calibration of the sim-to-real gap. We present RealImpact, a large-scale dataset of real object impact sounds recorded under controlled conditions. RealImpact contains 150,000 recordings of impact sounds of 50 everyday objects with detailed annotations, including their impact locations, microphone locations, contact force profiles, material labels, and RGBD images. We make preliminary attempts to use our dataset as a reference for evaluating how well current simulation methods estimate object impact sounds that match the real world. Moreover, we demonstrate the usefulness of our dataset as a testbed for acoustic and audio-visual learning by evaluating two benchmark tasks: listener location classification and visual acoustic matching.

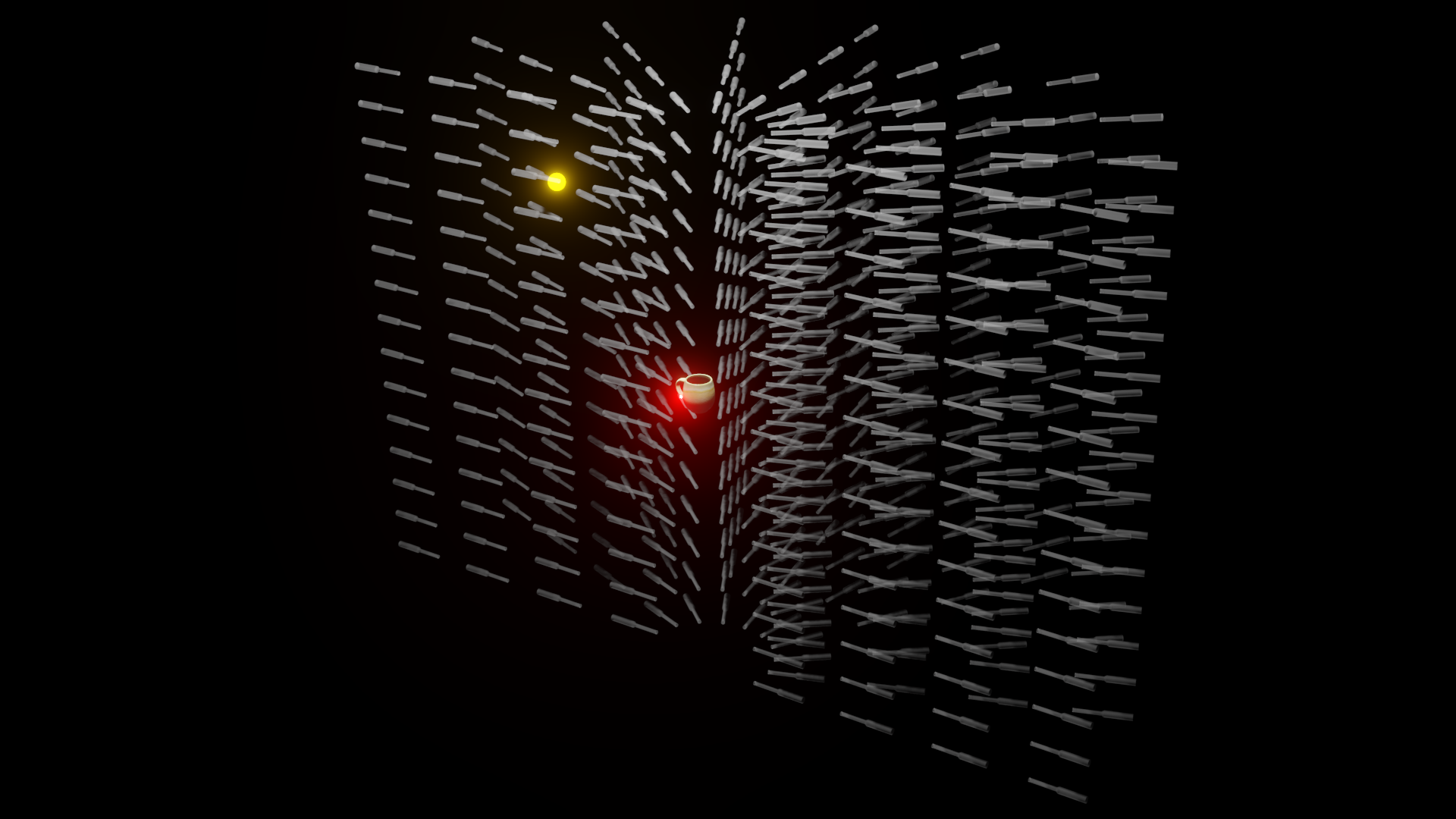

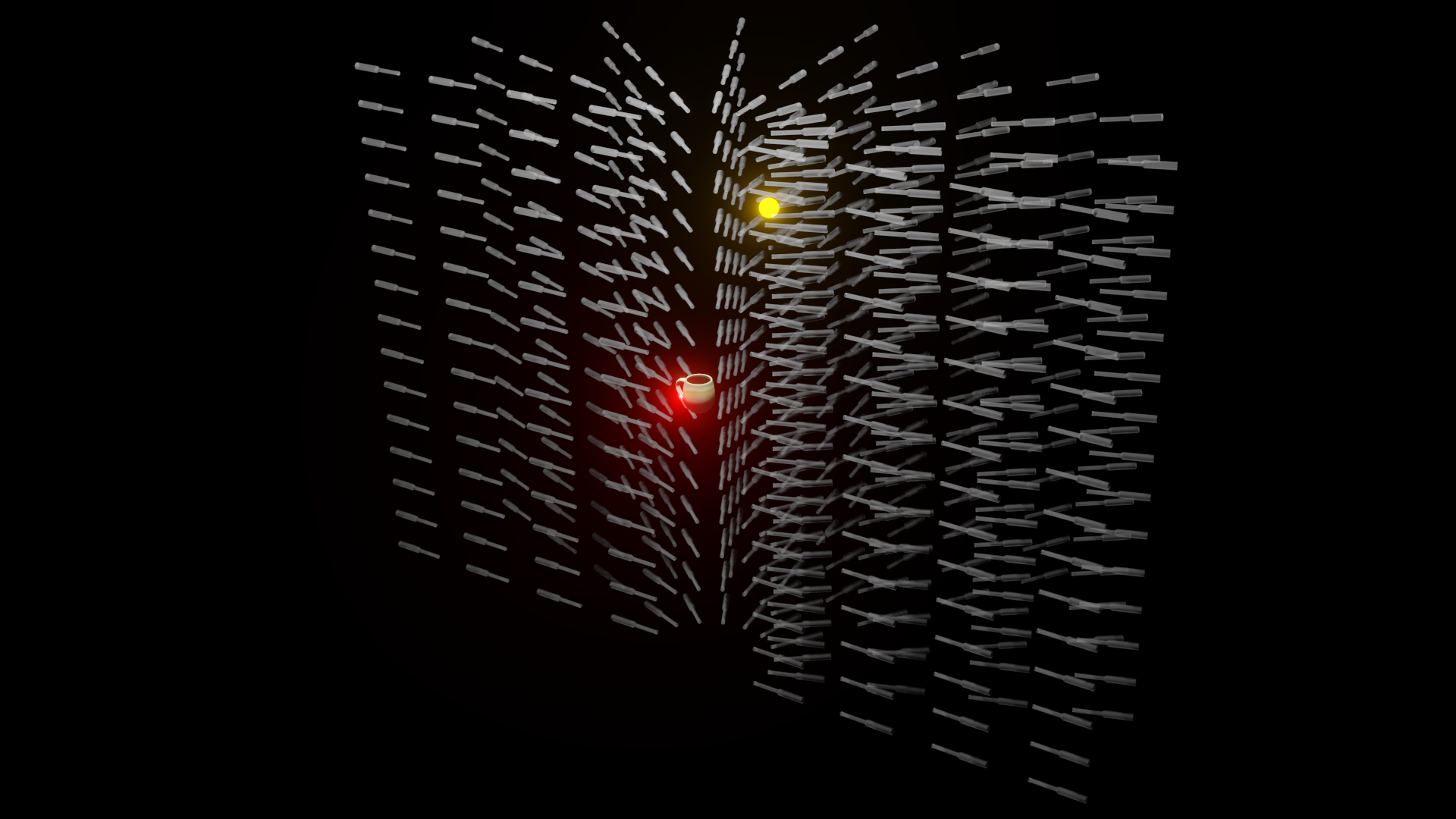

Sound Field Demo

Recording Setup

We use a custom mechanism to automate flicking an impact hammer at the object repeatably and with minimal noise (above). We strike the object repeatedly at the same vertex, moving a stack of 15 measurement microphones on a gantry between strikes to 10 azimuth angles and 4 distances per angle, for a total of 15 × 10 × 4 = 600 distinct microphone locations in the sound field of the object's impact sound (below).
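As a rough illustration of the counts above, the sketch below enumerates the microphone grid by index. The names `microphone_grid`, `NUM_MICS`, `NUM_AZIMUTHS`, and `NUM_DISTANCES` are hypothetical and are not part of any released RealImpact tooling. The physical angles, distances, and microphone heights are not given here, so only indices are used.

```python
from itertools import product

# Counts taken from the recording setup description.
NUM_MICS = 15        # microphones in the stack
NUM_AZIMUTHS = 10    # azimuth angles visited by the gantry
NUM_DISTANCES = 4    # distances visited per azimuth angle


def microphone_grid():
    """Return every (azimuth_idx, distance_idx, mic_idx) combination.

    Indices are placeholders; physical coordinates are not specified
    in the text, so none are assumed here.
    """
    return list(product(range(NUM_AZIMUTHS),
                        range(NUM_DISTANCES),
                        range(NUM_MICS)))


grid = microphone_grid()
print(len(grid))  # 600

# Assumption: every microphone location is recorded once per strike position.
# Under that assumption, the abstract's totals (150,000 recordings, 50 objects)
# imply this many strike positions per object.
recordings_per_object = 150_000 // 50
strike_positions = recordings_per_object // len(grid)
print(strike_positions)  # 5
```

The second figure is an arithmetic inference from the totals in the abstract, not a number stated in this section.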

Video

BibTeX

@inproceedings{clarke2023realimpact,
  title={RealImpact: A Dataset of Impact Sound Fields for Real Objects},
  author={Samuel Clarke and Ruohan Gao and Mason Wang and Mark Rau and Julia Xu and Jui-Hsien Wang and Doug L. James and Jiajun Wu},
  booktitle={Proceedings of the IEEE International Conference on Computer Vision and Pattern Recognition},
  year={2023}
}